The Challenge

At my recent employer (which works with the top video media company in the world), we faced a high-friction operational bottleneck: generating accurate, tone-consistent video synopses and metadata at scale. Traditional manual workflows were too slow, but raw LLM outputs were too unreliable for professional distribution.

I was tasked with building a system that moved our team from content creators to AI supervisors.

The “AI-Native” Approach

Instead of building a simple “wrapper” UI, I architected a multi-step agentic workflow. I focused on the “Human-in-the-Loop” (HITL) model, where the AI does the heavy lifting and the human provides the final “judgment.”

- The Framework: The system doesn’t just output text; it generates multiple variations and then uses a separate prompt-chain to evaluate them against specific brand guidelines and technical constraints.

- The Interface: I avoided the “chat box” trap. I designed a high-density dashboard that surfaced the “reasoning” behind the AI’s metadata suggestions, allowing editors to review, tweak, and approve outputs in seconds rather than minutes.

- The Stack: As a designer-engineer hybrid, I moved at a founder’s pace—using Cursor for “vibe coding” and Supabase/Vercel to ship a functional prototype that the team could use in production immediately.
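As a rough illustration of the generate-then-evaluate loop in the Framework bullet, here is a minimal Python sketch. It is not the production code: the names `rank_candidates`, `fake_generate`, and `length_judge` are hypothetical, and the two stand-in functions replace what would be real LLM calls (one prompt to draft variations, a separate prompt chain to judge them against guidelines). The point it shows is that each draft carries a score and human-readable reasons, which is the kind of information a review dashboard can surface to an editor.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Candidate:
    text: str
    score: float = 0.0
    reasons: List[str] = field(default_factory=list)


# Hypothetical interfaces: a generator returns n draft synopses for a transcript;
# a judge returns (score in [0, 1], reasons) for a single draft.
Generator = Callable[[str, int], List[str]]
Judge = Callable[[str], Tuple[float, List[str]]]


def rank_candidates(transcript: str, generate: Generator, judge: Judge, n: int = 3) -> List[Candidate]:
    """Draft n variations, score each with a separate judge, return best first."""
    ranked = []
    for text in generate(transcript, n):
        score, reasons = judge(text)
        ranked.append(Candidate(text, score, reasons))
    # sort() is stable, so drafts with equal scores keep their generation order.
    ranked.sort(key=lambda c: c.score, reverse=True)
    return ranked


def fake_generate(transcript: str, n: int) -> List[str]:
    """Stand-in for an LLM call: returns progressively longer excerpts."""
    words = transcript.split()
    return [" ".join(words[: 4 * (i + 1)]) for i in range(n)]


def length_judge(text: str, max_words: int = 8) -> Tuple[float, List[str]]:
    """Stand-in for the evaluation prompt chain: checks one assumed constraint."""
    count = len(text.split())
    if count <= max_words:
        return 1.0, [f"{count} words, within the {max_words}-word limit"]
    return 0.0, [f"{count} words, over the {max_words}-word limit"]


transcript = "one two three four five six seven eight nine ten eleven twelve"
ranked = rank_candidates(transcript, fake_generate, length_judge)
for candidate in ranked:
    print(candidate.score, candidate.reasons[0])
```

In this sketch the human stays in the loop after ranking: the sorted list and its reasons are what an editor would review, tweak, and approve, rather than the system publishing the top draft on its own.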

The Technical Architecture: Multi-Model Intelligence

To achieve professional-grade accuracy, we didn’t rely on a single “black box.” Instead, we architected a pipeline that combined best-in-class transcription with graph-based contextual mapping.

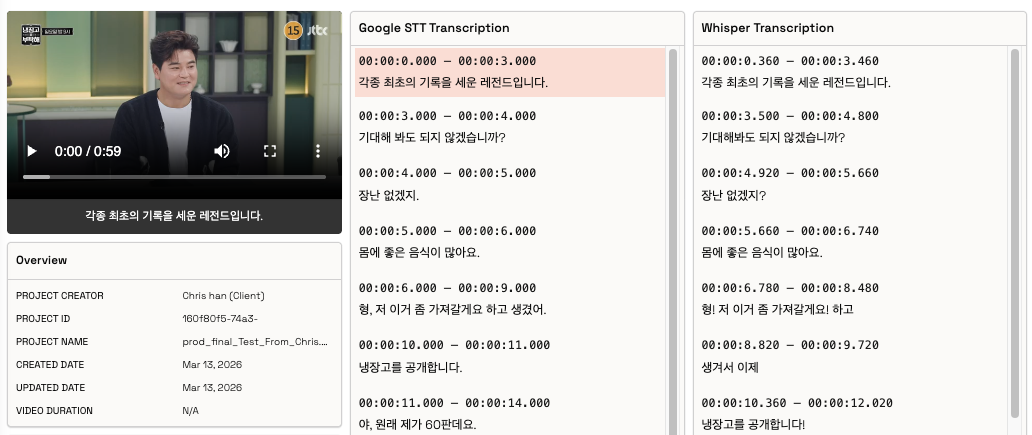

- Hybrid Transcription Engine: We used Google STT for its enterprise reliability and high-fidelity timestamps, coupled with OpenAI Whisper for its nuanced handling of diverse accents and technical jargon. By cross-referencing the two outputs, the system reached a baseline transcription accuracy high enough that transcripts needed only minimal human correction.

- Contextual Intelligence via Neo4j: One of the hardest parts of video metadata is categorizing content within the IAB (Interactive Advertising Bureau) Contextual Taxonomy. Instead of a flat list, we implemented Neo4j to manage our contextual database. This allowed the AI to traverse complex relationships between genres, sub-categories, and brand-safe keywords, ensuring that a “Sports” video wasn’t just labeled as such, but was mapped correctly within the wider graph of “Endurance Sports” or “Team Competitions.”

- LLM-as-a-Judge Framework: We used the structured data from the Neo4j graph as “Ground Truth” for the prompt chain in our evaluation layer. The AI would generate a synopsis, and the evaluation step would then cross-reference it against the Neo4j nodes to ensure all required contextual tags were accurately reflected in the text.
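To make the last two bullets more concrete, the sketch below uses a plain Python dictionary as a stand-in for the Neo4j graph. Everything in it is an assumption for illustration: the `PARENT` map, the "Marathon" node, and the helper names `path_to_root` and `missing_tags` are hypothetical, the category names are placeholders rather than actual IAB taxonomy entries, and the substring check is a crude substitute for the LLM judge. It shows two ideas: walking from a specific category up to its root, and flagging required tags that a draft synopsis fails to reflect.

```python
from typing import Dict, List, Optional, Set

# Illustrative child -> parent edges (None marks a root category).
# In the real system these relationships would live in the graph database.
PARENT: Dict[str, Optional[str]] = {
    "Sports": None,
    "Endurance Sports": "Sports",
    "Team Competitions": "Sports",
    "Marathon": "Endurance Sports",
}


def path_to_root(category: str) -> List[str]:
    """Return the chain from the root category down to `category`.

    Raises KeyError for a category that is not in the map.
    """
    path: List[str] = []
    node: Optional[str] = category
    while node is not None:
        path.append(node)
        node = PARENT[node]
    return path[::-1]


def missing_tags(synopsis: str, required: Set[str]) -> Set[str]:
    """Return required tags that never appear in the synopsis (case-insensitive)."""
    text = synopsis.lower()
    return {tag for tag in required if tag.lower() not in text}


path = path_to_root("Marathon")
draft = "Runners tackle a rainy city marathon in this highlights reel."
gaps = missing_tags(draft, {"marathon", "endurance"})

print(" > ".join(path))
print(sorted(gaps))
```

A non-empty `gaps` set is the signal that would send a draft back for regeneration or flag it for the editor. A model-based judge can catch paraphrases that simple string matching misses, which is presumably why the evaluation layer relied on a prompt chain rather than keyword checks.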